The Accidental Revelation: How MODEL1 Was Discovered

DeepSeek has been quietly updating its FlashMLA repository on GitHub over recent weeks. However, developers recently noticed something unusual: hidden within the codebase were 28 references to an unknown model identifier called "MODEL1" scattered across 114 files.

Why MODEL1 Is Significant

The discovery is remarkable for several technical reasons:

Independent Architecture Branch MODEL1 appears in the code logic structure as a parallel and independent branch alongside "V32" (DeepSeek-V3.2), the current model. This parallel structure strongly suggests MODEL1 represents a fundamentally different architecture rather than a simple version iteration.

Timing Alignment The GitHub deployment timeline perfectly aligns with widespread industry rumors that DeepSeek would launch a new flagship model during the Spring Festival period in mid-February 2026. Earlier reports from international media outlets had specifically mentioned a "DeepSeek V4" launch during this timeframe.

Development Code Pattern The "MODEL1" nomenclature follows common industry practices for internal development codenames or first engineering versions of major new releases. Companies frequently use neutral identifiers like "MODEL1" for projects still under wraps.

Anniversary Context The timing coincides with the one-year anniversary of DeepSeek-R1's launch, making it a symbolically significant moment for the company to unveil its next-generation flagship.

Revolutionary Architecture Changes

The leaked code fragments reveal technical specifications that point to major architectural transformations across multiple dimensions of the model's design and operation.

Mixed-Precision Sparse Computing

One of the most significant innovations exposed in the code involves MODEL1's approach to sparse and dense computation:

Dual Processing Capability The codebase includes two new test files: test_flash_mla_sparse_decoding.py and test_flash_mla_dense_decoding.py . Their simultaneous presence confirms MODEL1 can perform sparse and dense computation in parallel—a sophisticated capability that enables the model to optimize resource usage dynamically.

Hybrid Precision Strategy The implementation reveals an ingenious approach to balancing efficiency and accuracy:

- Key-value cache storage : Uses FP8 precision (8-bit floating point) to minimize memory footprint

- Matrix multiplication operations : Uses bfloat16 precision to maintain computational accuracy

This mixed-precision design demonstrates that MODEL1 selectively applies sparsification to portions of data during inference, effectively reducing memory consumption while preserving calculation quality.

Ultra-Long Context Implications The memory optimizations throughout the FP8 decoding path strongly indicate MODEL1 is designed to handle extremely long context windows—addressing one of the most significant limitations developers face with current AI models.

Dimensional Architecture Redesign

The code in csrc/api/common.h reveals a fundamental shift in MODEL1's attention mechanism:

512-Dimensional Attention Heads MODEL1 configures attention head parameters at 512 dimensions, a notable departure from DeepSeek V3.2's 576-dimensional setup. This change signals a complete redesign of the Multi-Head Latent Attention (MLA) structure.

From Asymmetric to Standardized Previous V3 series models employed an asymmetric design combining:

- 128-dimensional Rotary Position Encoding (RoPE)

- 448-dimensional hidden layer dimensions

The shift to standardized 512-dimensional configuration suggests one of two strategic directions:

- Hardware Optimization : The new dimensions may align better with GPU computational patterns, maximizing throughput on modern hardware

- Compression Breakthrough : DeepSeek may have achieved technical advances in hidden layer compression rates that enable more efficient 512-dimensional representations

Either way, this architectural change represents a fundamental rethinking of how the model processes and represents information.

NVIDIA Blackwell Architecture Optimization

The code updates reveal extensive work preparing MODEL1 for NVIDIA's next-generation GPU architecture:

Dedicated Blackwell Interfaces The codebase includes new interfaces specifically targeting Blackwell instruction sets, including FMHACutlassSM100FwdRun . This level of optimization requires deep collaboration between DeepSeek and NVIDIA engineering teams.

CUDA 12.9 Requirement Documentation within the code explicitly states that running MODEL1 on B200 GPUs requires CUDA 12.9 environment support. This dependency on the latest CUDA version indicates the model leverages cutting-edge features unavailable in earlier releases.

Impressive Performance Metrics Embedded performance indicator data reveals notable computational capabilities:

- Sparse MLA operators on B200 : Achieve 350 TFLOPS (trillion floating-point operations per second) even in unoptimized states

- Dense MLA operators on H800 (SM90a architecture): Reach 660 TFLOPS throughput

These numbers demonstrate that MODEL1 is engineered to extract maximum performance from both current and next-generation hardware platforms.

Strategic Hardware Positioning By optimizing for Blackwell architecture before most competitors, DeepSeek positions MODEL1 to demonstrate significant performance advantages as B200 GPUs become available—potentially establishing a temporary computational moat around their technology.

Advanced Memory and Processing Mechanisms

While the code commits primarily focus on operator-level implementation, scheduling logic references several innovative features that extend MODEL1's capabilities.

Value Vector Position Awareness (VVPA)

Code repository structure indicates MODEL1 integrates Value Vector Position Awareness technology. This innovation addresses a critical weakness in traditional MLA architectures:

The Problem : Position Information Degradation In long-text processing scenarios, conventional MLA structures gradually lose position information as context length increases. This degradation reduces the model's ability to maintain accurate understanding of where information appears in extended documents.

The Solution : Position-Aware Value Vectors VVPA technology maintains positional awareness throughout the value vectors used in attention mechanisms, preserving the model's understanding of information location even in extremely long contexts.

Practical Impact This capability is crucial for tasks involving:

- Large-scale code repositories spanning thousands of files

- Extensive documentation requiring precise cross-referencing

- Long-form content analysis maintaining structural awareness

- Multi-document reasoning preserving source attribution

Engram Memory Mechanism

Code comments reference a technology called the "Engram mechanism," though implementation details remain incomplete in public code commits.

Deployment Clues The mechanism's placement within distributed processing modules suggests its function likely relates to:

- Distributed storage optimization : Efficiently managing memory across multiple computational nodes

- Advanced key-value compression : Reducing memory overhead while maintaining access speed

- High-throughput support : Meeting MODEL1's performance requirements for rapid inference

Research Foundation DeepSeek's research team recently published a technical paper specifically on Engram technology. Industry observers noted at the time that Engram modules might become integral components of DeepSeek V4, signaling architectural-level advances in memory and reasoning coordination.

Speculative Capabilities If Engram functions as theorized, it could enable:

- More efficient long-term context retention

- Faster retrieval of relevant information from extensive context windows

- Reduced computational overhead for maintaining large context states

- Improved consistency in reasoning across extended interactions

Superior Coding Performance Claims

The architectural optimizations revealed in the code align with earlier reports about MODEL1's practical capabilities, particularly in software development tasks.

Reported Competitive Advantages

Previous leaks and industry sources have claimed that DeepSeek V4's coding performance surpasses established competitors:

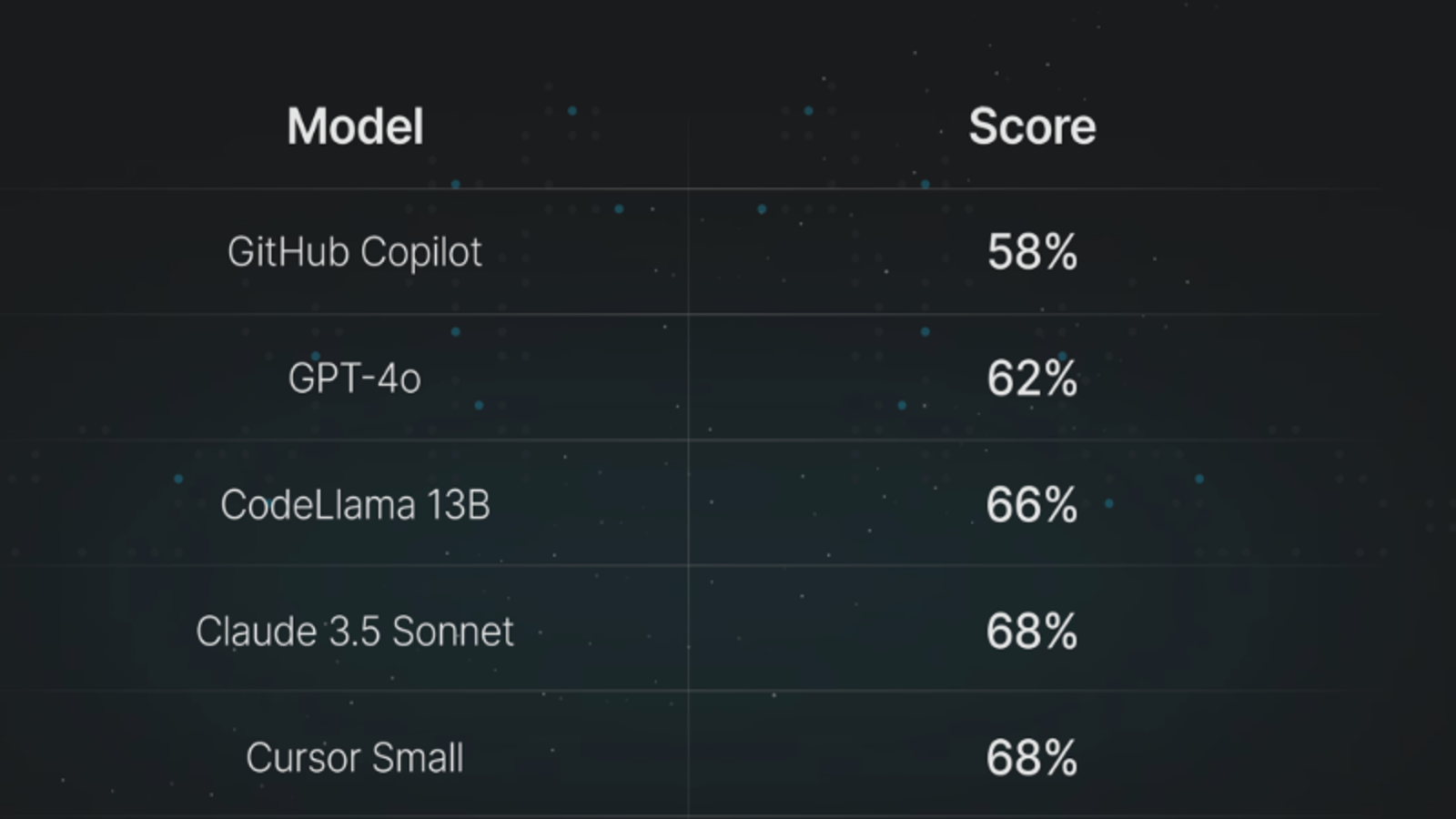

Model Comparisons MODEL1 allegedly outperforms both Claude and GPT series models on coding benchmarks, suggesting DeepSeek has achieved meaningful advances in code understanding and generation.

Enterprise-Scale Capabilities Beyond simple code completion, reports indicate MODEL1 possesses:

- Complex project architecture handling : Understanding and working with sophisticated system designs

- Large codebase navigation : Effectively processing and reasoning about extensive codebases with thousands or tens of thousands of files

- Engineering-grade reliability : Production-ready code generation suitable for professional development workflows

How Architecture Enables Coding Excellence

The architectural features revealed in the leaked code directly support these coding capabilities:

Long Context Processing The sparse computing and memory optimizations enable MODEL1 to process entire modules or subsystems as single contexts—critical for understanding architectural patterns and dependencies.

Precision Preservation The hybrid FP8/bfloat16 precision strategy maintains calculation accuracy where it matters most (matrix operations) while reducing memory pressure—essential for generating correct code.

Hardware Performance Blackwell optimization means MODEL1 can perform more computations per second, enabling more sophisticated reasoning about code structure and logic within acceptable response times.

Position Awareness VVPA technology helps the model maintain understanding of code structure, function locations, and dependency relationships across large files or multiple related files.

Global Anticipation and Industry Impact

The MODEL1 discovery has generated extraordinary excitement across international AI communities, with discussion threads proliferating across social media platforms and developer forums.

The "China AI Standing Up" Narrative

Yesterday, Hugging Face—the world's largest AI open-source community—published a special retrospective titled "One Year Since the DeepSeek Moment," examining R1's impact over the past twelve months.

Historic Significance Hugging Face noted that R1 represented the first time a Chinese-developed open-source model reached mainstream global rankings. Throughout the subsequent year, R1 became a critical reference benchmark whenever new models launched.

Platform Dominance R1 rapidly rose to become the most popular model in Hugging Face's history, and the platform's most favored models are no longer predominantly American-developed products—marking a significant shift in the AI landscape.

Breaking Down Barriers

Hugging Face's analysis highlighted how R1's true value lay in lowering barriers to advanced AI capabilities:

Technical Barriers By openly sharing reasoning paths and post-training methods, R1 transformed high-level reasoning previously locked behind closed APIs into downloadable, distillable, and fine-tunable engineering assets. Teams no longer needed to train massive models from scratch to achieve sophisticated reasoning capabilities.

Application Barriers R1's MIT license made usage, modification, and redistribution straightforward. Companies dependent on closed models began deploying R1 directly into production. Distillation, retraining, and domain-specific adaptation became routine engineering work rather than specialized projects.

Psychological Barriers When the question shifted from "Can we do this?" to "How do we do this well?", decision-making changed fundamentally across many organizations. For the Chinese AI community specifically, this represented a rare moment of sustained global attention—significant for an ecosystem long viewed as followers rather than leaders.

Ecosystem Acceleration

Hugging Face concluded: "One year after R1's release, we're witnessing not merely an influx of new models, but the accelerated formation of a vibrant Chinese AI open-source ecosystem."

This context makes MODEL1/V4's anticipated launch particularly significant. If the model delivers on the capabilities suggested by its architecture, it could further solidify China's position as an AI innovation leader rather than an implementer of Western innovations.

What MODEL1 Means for the AI Industry

The architectural revelations and timing of MODEL1's discovery carry implications that extend far beyond DeepSeek as a company.

Competitive Dynamics Shift

MODEL1's advanced features create pressure across the AI industry:

Performance Expectations If MODEL1 delivers coding performance superior to Claude and GPT models while handling ultra-long contexts, competitors must respond with their own architectural innovations or risk losing market share.

Cost-Performance Ratios DeepSeek's established pattern of offering competitive capabilities at lower price points means MODEL1 could force industry-wide pricing reconsideration—particularly if hardware optimizations enable efficient operation.

Open-Source Momentum Should DeepSeek release MODEL1 under permissive licensing similar to R1, it would accelerate the trend toward powerful open-source alternatives to proprietary models.

Technical Innovation Direction

MODEL1's architecture provides insights into where AI development is heading:

Hybrid Precision Computing The strategic use of different precision levels for different operations demonstrates that future models will increasingly employ sophisticated mixed-precision strategies rather than uniform precision across all computations.

Hardware Co-Design Deep optimization for specific GPU architectures (Blackwell) signals that future AI models will be co-designed with hardware rather than treating compute infrastructure as a commodity.

Memory Hierarchy Innovation Advanced mechanisms like Engram suggest the industry is moving beyond simple context windows toward more sophisticated memory architectures that enable genuinely long-term context maintenance.

Sparse-Dense Parallelization The ability to perform sparse and dense computation simultaneously represents an evolution in model efficiency—future systems will likely adopt similar hybrid approaches.

Geopolitical Technology Landscape

MODEL1's emergence continues reshaping the narrative around AI development geography:

Multi-Polar Innovation The era of AI innovation dominated by a few American companies is clearly ending. Multiple centers of excellence are emerging, creating a more distributed and resilient global AI ecosystem.

Competitive Pressure Benefits Increased competition from Chinese AI companies accelerates innovation globally, driving improvements in capabilities, efficiency, and accessibility across all markets.

Talent Distribution World-class AI research and engineering is no longer concentrated in Silicon Valley—it's distributed across Beijing, Hangzhou, and other Chinese cities, as well as emerging hubs worldwide.

Preparing for MODEL1/V4 Launch

Organizations and developers should take specific steps to prepare for evaluating MODEL1 when it officially launches:

Technical Preparation

Infrastructure Assessment Evaluate whether your current GPU infrastructure can support MODEL1's requirements:

- CUDA version compatibility (likely 12.9+ required for optimal performance)

- Available GPU memory for long-context processing

- Distributed computing capabilities if deploying at scale

Benchmark Framework Develop testing scenarios that leverage MODEL1's distinctive capabilities:

- Ultra-long context tasks (10,000+ tokens) where current models struggle

- Complex coding projects requiring architectural understanding

- Sparse computation scenarios that should benefit from hybrid precision

Integration Planning Consider how MODEL1 might integrate into existing workflows:

- API compatibility with current tooling

- Migration paths from existing AI coding assistants

- Training requirements for development teams

Strategic Considerations

Competitive Analysis Understand how MODEL1's capabilities compare to your current AI solutions:

- Performance on tasks critical to your workflows

- Cost-effectiveness at your usage scale

- Licensing terms and deployment flexibility

Risk Assessment Evaluate security, compliance, and operational factors:

- Data handling policies for proprietary code

- Regulatory compliance in your jurisdiction

- Vendor diversification strategy

Pilot Program Design Plan a structured evaluation:

- Select representative use cases

- Define success metrics

- Establish evaluation timeline

- Gather systematic feedback

- Make data-driven adoption decisions

The Broader Trajectory

MODEL1's leaked architecture provides a window into where AI model development is headed over the next 12-24 months.

Expected Industry Responses

Competitors will likely announce their own architectural innovations:

Extended Context Windows MODEL1's apparent ultra-long context capabilities will pressure other providers to match or exceed this functionality.

Hardware-Specific Optimization Expect more models optimized for specific GPU architectures as vendors recognize the performance advantages of hardware co-design.

Open-Source Alternatives If MODEL1 succeeds, more organizations may release competitive open-source models to avoid dependence on any single provider.

Developer Ecosystem Evolution

The developer community will adapt to MODEL1's capabilities:

Workflow Transformation Ultra-long context windows will enable new development patterns where AI assistants maintain awareness of entire projects rather than isolated files.

Quality Expectations If MODEL1 delivers superior coding performance, developer expectations for AI assistance quality will rise across the board.

Tool Integration Development environment vendors will create deeper integrations to leverage MODEL1's distinctive features effectively.

Key Takeaways

The MODEL1 discovery and analysis reveal several critical insights:

For Developers

- Prepare for ultra-long context capabilities that may transform how you interact with AI coding assistants

- Expect superior coding performance based on architectural optimizations specifically targeting software development

- Consider evaluation once officially launched to compare against current tools

- Monitor hardware requirements as MODEL1 may benefit significantly from next-generation GPUs

For Organizations

- Assess strategic implications of potentially superior and cost-effective AI coding capabilities

- Plan evaluation frameworks that objectively measure MODEL1's performance on relevant tasks

- Consider vendor diversification as competitive landscape becomes more multi-polar

- Prepare for industry shift toward open-source, high-capability AI models

For the AI Industry

- Architectural innovation accelerating beyond simple parameter scaling

- China establishing leadership in specific AI capability domains

- Open-source models closing gap with proprietary alternatives

- Hardware-software co-design becoming critical competitive factor

Conclusion

The accidental exposure of MODEL1 code in DeepSeek's GitHub repository offers a fascinating glimpse into the next generation of AI model architecture. The fundamental changes revealed—from 512-dimensional attention heads and mixed-precision sparse computing to Blackwell GPU optimization and innovative memory mechanisms—suggest MODEL1 represents a comprehensive architectural overhaul rather than incremental improvement.

If the model delivers on the capabilities its architecture promises, particularly in coding performance and ultra-long context handling, it could significantly impact the competitive landscape. Combined with DeepSeek's pattern of aggressive pricing and open-source release, MODEL1/V4 may accelerate industry-wide shifts toward more capable, efficient, and accessible AI systems.

The mid-February 2026 launch window coinciding with R1's one-year anniversary creates symbolic significance for this release. One year ago, DeepSeek demonstrated that Chinese AI companies could produce models competitive with or superior to Western alternatives. MODEL1 appears positioned to reinforce that message with even more dramatic architectural innovations.

For the global developer community, MODEL1's imminent arrival is welcome news regardless of whether it becomes the dominant tool. More competition, innovation, and open-source alternatives benefit everyone working to leverage AI assistance in software development. As the February launch approaches, attention will focus intensely on whether MODEL1's real-world performance matches the promise revealed in its leaked architecture.