Direct Answer: Is Gemini 3.1 Pro Better Than Opus 4.6?

Gemini 3.1 Pro is a major architectural improvement over the previous 3.0 iteration, matching Claude Opus 4.6 in raw reasoning and scientific error detection while offering better cost-efficiency. However, it still falls well short of Opus 4.6 in complex project planning and report detail: in one refactoring test its plan was about 10x shorter than Opus 4.6's (roughly 2,500 tokens versus 25,000), which is too brief for professional-grade documentation. For front-end design and quick iterations, Gemini 3.1 Pro is the new leader; for holistic codebase refactoring and detailed system architecture, Opus 4.6 remains the gold standard.

Introduction

The AI landscape in 2026 has reached a fever pitch with the surprise "Day 1" release of Google’s Gemini 3.1 Pro. This model arrives as a direct response to Anthropic’s Opus 4.6 and the highly specialized Codex 5.3. Based on early community feedback and rigorous hands-on testing in environments like Google Antigravity and OpenCode, this review dissects whether Google has finally closed the "intelligence gap" or if their models are still optimized for benchmarks over real-world utility.

1. The Redemption of Gemini: From 3.0 to 3.1

To understand the impact of Gemini 3.1 Pro, one must acknowledge the shortcomings of its predecessor. Gemini 3.0 Pro was widely criticized for being "lazy," "trigger-happy" with hallucinations, and overly confident in its errors.

Key Improvements in the 3.1 Architecture:

Instruction Adherence: 3.1 Pro follows system prompts and complex constraints far more reliably than 3.0 did.

Reduced Hallucinations: Users report a much lower frequency of "conspiracy-style" logical leaps.

Reasoning Depth: In scientific research analysis, the model has demonstrated a unique ability to find mathematical gaps that even Opus 4.6 missed.

Speed and Quota: It consumes more quota than previous versions (roughly 10% of the available quota per heavy prompt), but its speed-to-accuracy ratio is currently the best in the "Pro" class.
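As a rough illustration of what the quota figure means in practice, the sketch below works out how many heavy prompts fit in one quota window. It assumes the cost is a flat 10% per heavy prompt, as reported above; the function name and the integer-percent representation are hypothetical, and real usage will vary with prompt size.

```python
def heavy_prompts_per_quota(percent_per_prompt: int) -> int:
    """Return how many prompts fit in a 100% quota at a flat per-prompt cost.

    percent_per_prompt is an integer percentage (assumption: cost is constant).
    """
    if percent_per_prompt <= 0:
        raise ValueError("percent_per_prompt must be positive")
    # Integer division avoids floating-point rounding surprises.
    return 100 // percent_per_prompt


# Using the roughly 10% figure reported for heavy prompts.
prompts = heavy_prompts_per_quota(10)
print(f"About {prompts} heavy prompts per quota window")
```

At that rate, a session of heavy prompts would use up the quota after about ten requests, so lighter prompts are worth reserving for routine work.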

2. The Planning Gap: Why Opus 4.6 Still Holds the Crown

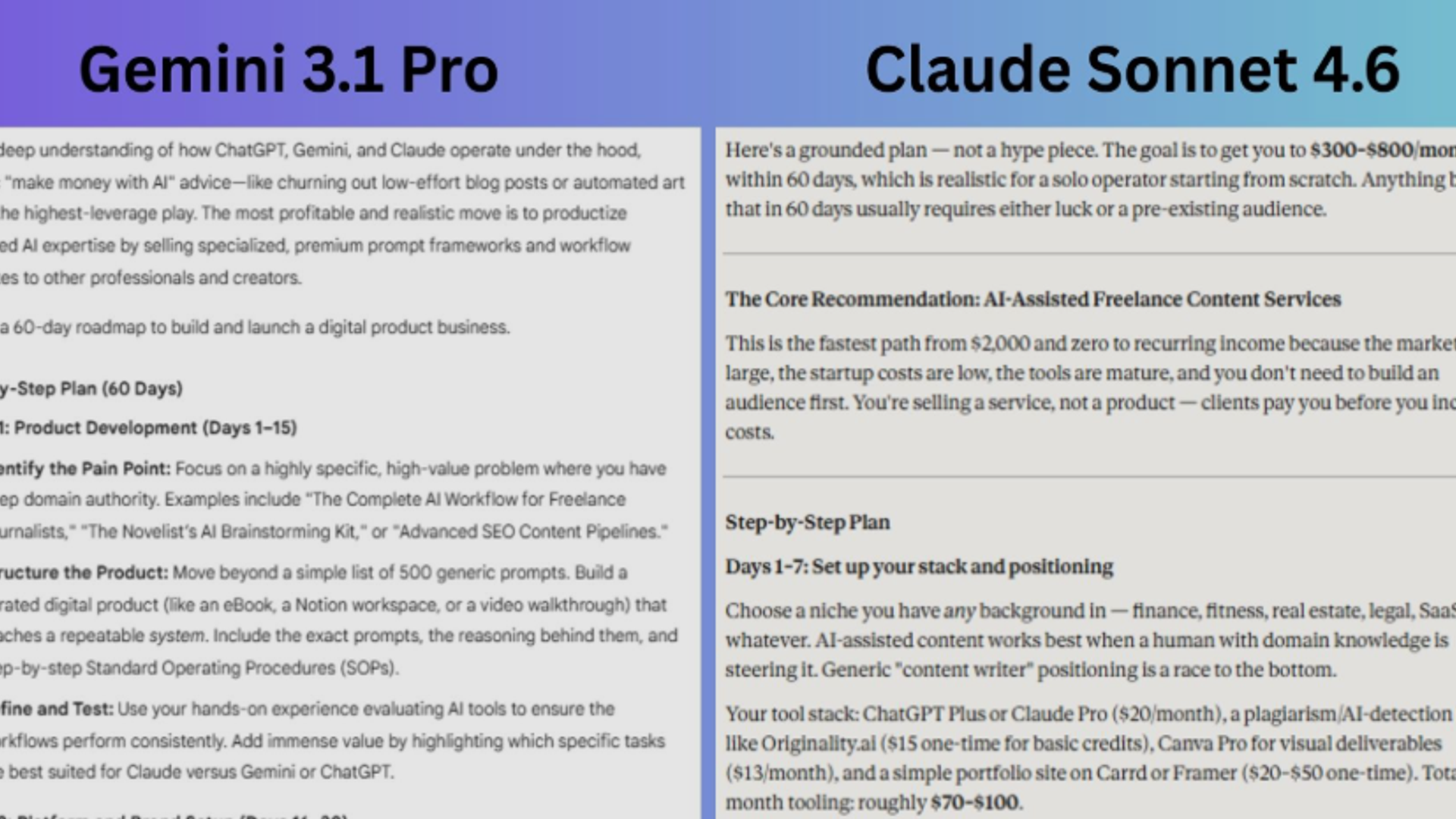

The most glaring difference between Gemini 3.1 Pro and Opus 4.6 lies in output verbosity and planning depth. In a "Day 1" anecdote shared by senior developers, the models were tasked with refactoring a unit containing three distinct data streams.

Performance Breakdown:

Opus 4.6: Produced a 25,000-token comprehensive plan. It correctly identified all sub-streams, accounted for edge cases, and wrote a step-by-step implementation guide.

Gemini 3.1 Pro: Delivered a mere 2,500-token summary. While the logic was "correct," it lacked the granular tasks required for a developer to actually begin work.

Codex 5.3: Sat in the middle, providing high detail but in a "bullet-point hell" format that was difficult for humans to parse.

Verdict: If your workflow requires "War and Peace" levels of detail to ensure no edge case is missed, Gemini 3.1 Pro will likely frustrate you with its brevity.
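The size of the gap follows directly from the two token counts reported in the anecdote above. A minimal sketch of the arithmetic, using only those two figures (the helper name is hypothetical):

```python
# Plan lengths reported in the Day 1 refactoring anecdote.
opus_plan_tokens = 25_000
gemini_plan_tokens = 2_500


def verbosity_ratio(longer: int, shorter: int) -> float:
    """Return how many times longer one output is than another."""
    return longer / shorter


ratio = verbosity_ratio(opus_plan_tokens, gemini_plan_tokens)
print(f"Opus 4.6 plan is {ratio:.0f}x longer than the Gemini 3.1 Pro plan")
```

This is a single anecdote, so the 10x figure should be read as one data point rather than a general measurement.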

3. Real-World Comparison Table

To facilitate decision-making, the following table compares the three frontier models across critical performance metrics.

4. Specialized Strengths: Science and Design

Surprisingly, Gemini 3.1 Pro has carved out a niche where it objectively outperforms the competition: Scientific Research and Front-End UI.

Scientific Error Detection

In consistency checks involving complex Python scripts and academic papers, Gemini 3.1 Pro identified methodological errors that Opus 4.6 and Codex 5.3 labeled as "perfect." This suggests Google has optimized for "skepticism" and "thoroughness" in analytical tasks, even if that same thoroughness doesn't always translate to the length of its prose.

The "Ultrarender" Advantage

For "Vibe Coders" and front-end developers, Gemini 3.1 Pro’s integration with modern rendering pipelines (like the rumored Ultrarender 3x) gives it an edge. It produces designs with better contrast, balance, and a "less AI-generated" aesthetic compared to the somewhat sterile outputs of Opus 4.6.

5. Coding Workflow: Vibe Coding vs. Structured Engineering

The choice between these models often comes down to your personal coding style.

The Gemini 3.1 Approach:

Proactive Problem Solving: If a solution fails, Gemini 3.1 often tries a different path autonomously.

The "Rewriter" Quirk: It has a habit of rewriting entire 100-line files to add a single line, which can be wasteful in token-limited environments.

Tool Usage: It still struggles with autonomous tool calling, often preferring to pipe commands via Unix strings rather than using built-in IDE tools.
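To make the "Rewriter" quirk concrete, here is a minimal sketch that compares emitting a whole file against emitting a one-line unified diff, using Python's standard `difflib`. The 100-line file, the inserted line, and the use of character counts as a rough stand-in for tokens are all illustrative assumptions, not measurements of any model.

```python
import difflib


def rewrite_vs_diff(original_lines, new_lines):
    """Return (characters in a full rewrite, characters in a unified diff)."""
    full = "\n".join(new_lines)
    # n=1 keeps one line of context on each side of the change.
    diff = "\n".join(
        difflib.unified_diff(original_lines, new_lines, lineterm="", n=1)
    )
    return len(full), len(diff)


# Hypothetical 100-line file with a single line added in the middle.
original = [f"value_{i} = {i}" for i in range(100)]
updated = original[:50] + ["inserted_value = -1"] + original[50:]

full_chars, diff_chars = rewrite_vs_diff(original, updated)
print(f"full rewrite: {full_chars} chars, diff: {diff_chars} chars")
```

In this toy case the diff is a small fraction of the full file, which is why whole-file rewrites are costly when output tokens are limited.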

The Opus 4.6 Approach:

Autonomous Exploration: Opus 4.6 is better at using IDE tools (like explore or journal) without being nudged.

Systematic Execution: It creates a plan, verifies it, and then executes—leading to fewer bugs in large-scale (40k+ lines) projects.

6. Community Sentiment: Reddit Roundup

User feedback from the r/google_antigravity and r/vibecoding communities highlights the polarizing nature of this update:

"Gemini 3.1 Pro is usable... very usable. If you account for cost, it’s arguably better than Opus 4.6 for daily tasks." - Aotrx (Reddit)

"Opus 4.6 is the only model that says 'I don't know, let's run tests.' That honesty saves me hours of debugging compiled languages." - Senior Dev Review

"Gemini 3.1 still feels like it’s in a 'honeymoon phase.' It’s sharp and precise, but it ignores instructions the moment the conversation gets long." - User Feedback

Summary and Final Recommendation

Gemini 3.1 Pro is a triumph of efficiency and raw intelligence. It is the first Google model that truly belongs in the same conversation as Anthropic’s flagship.

Choose Gemini 3.1 Pro if: You are focused on front-end design, need quick scientific data verification, or are working on a budget.

Choose Opus 4.6 if: You are architecting a complex system from scratch and need a "junior partner" who will write a 20-page manual on why a specific variable was chosen.

Choose Codex 5.3 if: You are performing a deep backend audit and want a model that prioritizes documentation and safety over creative flair.
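For teams that route work between models, the recommendations above can be written down as a simple lookup. This is only a sketch: the task labels and the function name are hypothetical, and the mapping just restates this review's recommendations rather than any vendor guidance.

```python
# Hypothetical task labels mapped to the model this review recommends.
RECOMMENDED_MODEL = {
    "front_end_design": "Gemini 3.1 Pro",
    "scientific_verification": "Gemini 3.1 Pro",
    "budget_constrained": "Gemini 3.1 Pro",
    "system_architecture": "Opus 4.6",
    "backend_audit": "Codex 5.3",
}


def recommend_model(task: str) -> str:
    """Return the recommended model for a task label, or raise ValueError."""
    try:
        return RECOMMENDED_MODEL[task]
    except KeyError:
        raise ValueError(f"No recommendation for task {task!r}") from None


print(recommend_model("system_architecture"))
```

Raising on unknown labels, instead of falling back to a default model, keeps the routing decision explicit.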

FAQ: Gemini 3.1 Pro vs. The Field

1. Does Gemini 3.1 Pro have higher usage limits than Opus 4.6?

Yes. Generally, Google's Pro tier offers more flexible usage limits, though Gemini 3.1 Pro consumes about 10% of a standard "quota" per high-complexity prompt, making it "heavier" than the previous Flash models.

2. Can Gemini 3.1 Pro handle 100k+ line codebases?

While it has a massive context window, its "planning" ability degrades significantly in long conversations. Users recommend using it for "unit-level" tasks rather than "repo-wide" architecture.

3. Is the scientific research capability real?

Early testers have confirmed that Gemini 3.1 Pro caught specific math and methodological errors in 1,800-line data pipelines that other SOTA models missed. It appears to be highly optimized for consistency checking.

4. Why is Gemini's output so much shorter than Opus?

This appears to be a deliberate alignment choice by Google to favor "conciseness" and "speed." Unfortunately, for professional reporting, this often results in "lazy" outputs that require significant follow-up prompting.

5. What is "Vibe Coding" in the context of these models?

Vibe coding refers to a style of development where the user provides high-level intent and visual cues rather than strict technical specs. Gemini 3.1 Pro is currently favored for this due to its superior front-end rendering and proactive "guessing" of design intent.