| Project | Primary Focus | Best Use Case | License |
|---|---|---|---|
| PyTorch | Deep Learning Research | Rapid prototyping & SOTA models | BSD |
| TensorFlow | Production ML | Large-scale, enterprise MLOps | Apache 2.0 |
| Bifrost | LLM Gateway | Managing multiple model providers | Apache 2.0 |
| E2B Sandboxes | Agent Execution | Secure code execution for agents | MIT |
| Scikit-learn | Classical ML | Statistical modeling & data mining | BSD |
| Claude Code | Agentic Coding | Automating codebase refactors | Commercial/OSS |
| Dataline | Private Data Science | SQL generation & local reporting | Apache 2.0 |

What are the key projects transforming AI?

The 16 open-source projects currently transforming AI and machine learning are Bifrost (unified LLM gateway), Claude Code (agentic pair programmer), Clawdbot (desktop AI assistant), E2B sandboxes (secure agent environments), Dataline (local SQL-to-report tool), Swirl Connect (RAG search indexer), Upscayl (image enhancement), Nyro (OS productivity automation), Geppetto (Slack documentation enhancer), Agent Skills (modular agent capabilities), PyTorch (research-standard deep learning), TensorFlow (production-grade ML), Scikit-learn (classical ML), Keras (high-level DL), Hugging Face Transformers (pretrained model library), and Oryx (real-time decision engines). With these tools, developers can build everything from autonomous agent swarms to sophisticated local data analysis pipelines without vendor lock-in.

Introduction

Much of the most innovative software of 2026 has emerged not from closed labs but from the collaborative world of open source. From fine-tuning massive models to building autonomous agentic frameworks, the open-source ecosystem now underpins much of enterprise computing and cloud infrastructure.

1. Essential Categories of Open Source AI Projects

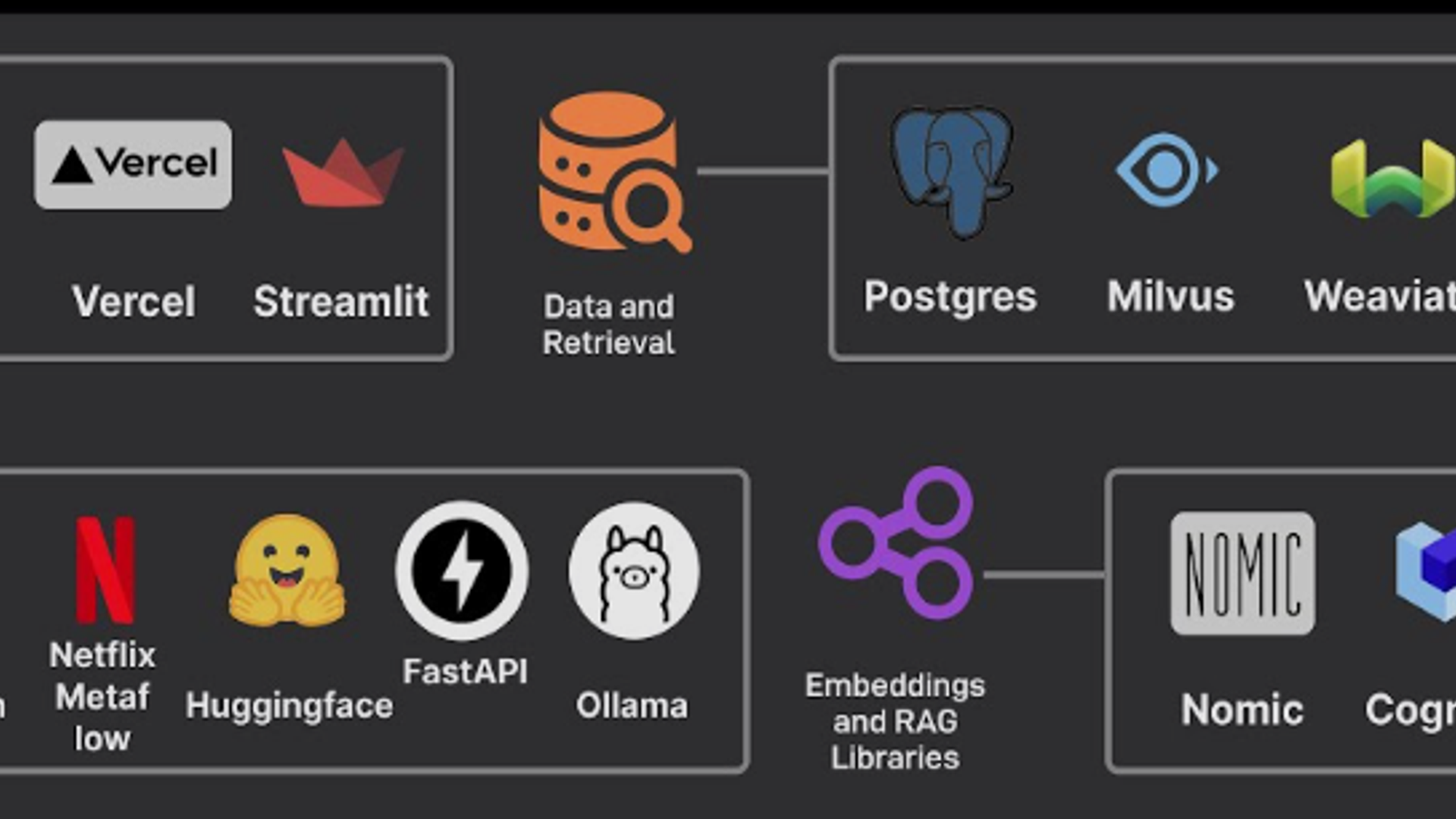

To better understand the landscape, these projects can be categorized by their primary function in the AI development lifecycle.

Model Orchestration and Gateways

- Bifrost: A fast, unified gateway to over 15 LLM providers. It uses an OpenAI-compatible API to abstract away differences between models, incorporating governance, caching, and load balancing.

- Agent Skills: A library designed to give autonomous agents modular "skills," such as interacting with specific APIs or performing structured data extraction.
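To make the "OpenAI-compatible API" idea concrete, here is a minimal sketch of what a request to such a gateway could look like, using only the Python standard library. The gateway URL, the model names, and the API key are hypothetical placeholders, not values taken from Bifrost's documentation; the request body follows the widely used chat-completions shape (`model` plus a list of `messages`). The request is built but not sent, so the snippet runs without a gateway.

```python
import json
from urllib import request

# Hypothetical local gateway address; replace with your own deployment's URL.
GATEWAY_URL = "http://localhost:8080/v1/chat/completions"


def build_chat_request(model: str, prompt: str, api_key: str = "placeholder-key") -> request.Request:
    """Build an OpenAI-style chat completion request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return request.Request(
        GATEWAY_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# Only the model string changes between providers; the request shape stays the same.
# Both model names below are made up for illustration.
for model_name in ("provider-a/model-x", "provider-b/model-y"):
    req = build_chat_request(model_name, "Summarize this changelog.")
    print(req.get_method(), req.full_url, json.loads(req.data)["model"])
```

To actually send the request you would pass it to `urllib.request.urlopen`, assuming a gateway is listening at the configured address.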

Developer Productivity and Coding Assistants

- Claude Code: An agentic pair programmer that works across major programming languages. It reads your local codebase and carries out refactors and feature additions from natural-language instructions.

- Nyro: A productivity tool built on Electron that automates mundane OS tasks like window resizing and data synchronization between apps.

- Geppetto: A Slackbot that connects team channels to LLMs and turns casual conversations into clean, structured documentation.

Data Analysis and Secure Environments

- E2B Sandboxes: Provides secure, isolated cloud environments where LLMs can execute code, browse the web, and manage cloud infrastructure safely.

- Dataline: Uses LLMs to generate SQL commands for local databases, creating data science reports locally to ensure data sovereignty.

- Swirl Connect: Simplifies Retrieval-Augmented Generation (RAG) by linking standard databases with LLMs and search indices without extensive reformatting.
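When an LLM generates SQL for a local database, one reasonable precaution is to run that SQL on a connection that can only read. The sketch below illustrates the idea with Python's built-in `sqlite3` module and its `set_authorizer` hook. It is not how Dataline itself is implemented; the `sales` table, the helper names, and the example queries are assumptions made up for illustration.

```python
import sqlite3

# Actions a read-only report query is allowed to perform.
READ_ONLY_ACTIONS = {sqlite3.SQLITE_SELECT, sqlite3.SQLITE_READ, sqlite3.SQLITE_FUNCTION}


def read_only_authorizer(action, arg1, arg2, db_name, source):
    """Allow reads and function calls; deny every write or schema change."""
    return sqlite3.SQLITE_OK if action in READ_ONLY_ACTIONS else sqlite3.SQLITE_DENY


def make_demo_db() -> sqlite3.Connection:
    """Create a small in-memory database, then lock the connection to reads."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
    conn.executemany(
        "INSERT INTO sales VALUES (?, ?)",
        [("north", 120.0), ("south", 80.0), ("north", 30.0)],
    )
    conn.commit()
    # From here on, every statement is checked by the authorizer.
    conn.set_authorizer(read_only_authorizer)
    return conn


def run_generated_sql(conn: sqlite3.Connection, sql: str) -> list:
    """Execute SQL (for example, model-generated) and return all rows."""
    return conn.execute(sql).fetchall()


conn = make_demo_db()

# A report-style query is permitted.
print(run_generated_sql(
    conn, "SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region"
))  # [('north', 150.0), ('south', 80.0)]

# A destructive statement is rejected before it can run.
try:
    run_generated_sql(conn, "DROP TABLE sales")
except sqlite3.Error as exc:
    print("rejected:", exc)
```

Enforcing the restriction at the database layer, rather than by inspecting the SQL string, means the guard does not depend on parsing whatever the model produced.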

2. Comparison of Top AI Frameworks and Tools

The comparison table at the top of this article covers the leading frameworks and tools by focus, best use case, and license to help you decide which to integrate into your stack.

3. How to Implement Open Source AI in Your Workflow

Transitioning to an open-source AI stack involves several strategic steps:

- Define Your Sovereignty Needs: Determine if you need "Open Weights" (running models locally) or just "Open Tooling" (using open frameworks to call cloud APIs).

- Select a Foundation Framework: Use PyTorch for flexibility in model design or TensorFlow for established production pipelines.

- Implement a Gateway: Use Bifrost to avoid vendor lock-in, allowing you to swap between providers like Anthropic, OpenAI, or local Llama instances effortlessly.

- Secure Agent Actions: If building agents, deploy E2B sandboxes to ensure AI-generated code doesn't compromise your host system.

- Automate Documentation: Integrate Geppetto or Claude Code to maintain code quality and documentation standards autonomously.
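The provider-swapping step above can be sketched as a simple fallback loop: try each provider in order and return the first successful answer. This is an illustration of the routing idea only, not Bifrost's actual implementation. The two provider functions are hypothetical stand-ins; in practice each would wrap a real client call.

```python
from typing import Callable, Sequence, Tuple

# A provider is any callable that takes a prompt and returns text.
Provider = Callable[[str], str]


class AllProvidersFailed(RuntimeError):
    """Raised when every configured provider raised an error."""


def complete_with_fallback(
    prompt: str, providers: Sequence[Tuple[str, Provider]]
) -> Tuple[str, str]:
    """Return (provider_name, completion) from the first provider that succeeds."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # collect the failure and try the next provider
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Hypothetical stand-ins for real provider clients.
def flaky_cloud(prompt: str) -> str:
    raise TimeoutError("upstream timed out")


def local_model(prompt: str) -> str:
    return f"[local] {prompt.upper()}"


name, text = complete_with_fallback(
    "hello", [("cloud", flaky_cloud), ("local", local_model)]
)
print(name, text)  # local [local] HELLO
```

Because callers depend only on the `Provider` signature, reordering or replacing entries in the list changes routing without touching application code.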

4. The Impact of Agentic AI in 2026

The shift from traditional machine learning to agentic AI represents a fundamental change in enterprise technology. Traditional ML pipelines typically rely on a human to trigger and review each stage; modern agentic systems, powered by projects like Clawdbot and E2B, allow for autonomous orchestration of complex workflows.

"The biggest security risk in 2026 is no longer human behavior; it's agent behavior." — Nadav Cornberg, CEO of Eve Security.

Open standards are essential to harden the protocols these agents use, making transparency a functional requirement rather than just a philosophical preference.

5. FAQ: Open Source AI and Machine Learning

Q: Why should I choose open-source AI over proprietary models like GPT-5?

A: Open-weights models (like Llama 4 or Qwen 3) can reduce inference costs by up to 90% in favorable cases, eliminate vendor lock-in, and provide data sovereignty by allowing you to run models on your own hardware.

Q: What is the difference between "Open Weights" and "Open Source"?

A: "Open Source" usually refers to the code (tooling, frameworks), while "Open Weights" means you can download the trained model parameters. Fully open AI goes further and also includes transparency about the training pipeline and datasets.

Q: Is PyTorch or TensorFlow better for 2026 development?

A: PyTorch remains the leader for research and rapid prototyping due to its "Pythonic" nature. TensorFlow is often preferred by large enterprises for its mature MLOps and production-grade deployment ecosystem.

Q: How do I keep my data private when using AI?

A: Tools like Dataline allow you to perform data analysis locally. When you run open-weights models on local GPUs, your sensitive data never leaves your infrastructure.

Conclusion

The 16 projects highlighted here are more than just tools; they are the building blocks of a transparent and accessible future. Whether you are enhancing digital art with Upscayl or orchestrating a 100-agent swarm with Bifrost and E2B, the open-source community provides the expertise and the infrastructure to build without limits.