Google Gemini 3.1 Pro is Google DeepMind's latest flagship AI model, officially released in preview on February 19, 2026. It scores 77.1% on the ARC-AGI-2 abstract reasoning benchmark, more than double its predecessor's score, and outperforms GPT-5.2 and Claude Opus 4.6 on several key tests, all at the same price as the previous generation.

This article covers Gemini 3.1 Pro's five core upgrades, head-to-head benchmark comparisons with competing models, real-world performance strengths and weaknesses, which users and industries will benefit most, and a step-by-step guide to getting started today. If you are evaluating frontier AI models for personal, development, or enterprise use, this is the complete breakdown you need.

What Is Gemini 3.1 Pro and Why Does It Matter?

Just three months after releasing Gemini 3 Pro, Google DeepMind has delivered what may be the most significant mid-cycle upgrade in the competitive AI model space. Gemini 3.1 Pro is not an incremental patch: it represents a fundamental rearchitecting of reasoning depth, multimodal fluency, context handling, and output quality, all at once.

The model's headline achievement is a 77.1% score on ARC-AGI-2, the industry's most demanding abstract reasoning benchmark — more than double the previous generation's 31.1% and well ahead of Claude Opus 4.6 (68.8%). On the graduate-level academic reasoning test Humanity's Last Exam (no tools), it scores 44.4%, topping both Claude Opus 4.6 (40.0%) and GPT-5.2 (34.5%).

Equally important: Google has not raised the price. Developers and enterprises get substantially more capability at the exact same cost — making this release one of the most competitive value propositions in the current AI landscape.

Five Core Upgrades in Gemini 3.1 Pro

1. Reasoning Capability — More Than Doubled

The most transformative upgrade is in abstract and academic reasoning:

- ARC-AGI-2 score: 77.1% (up from 31.1% in the previous generation)

- Humanity's Last Exam (no tools): 44.4% — outperforming all major competitors

- Handles graduate-level cross-disciplinary tasks in mathematics, physics, chemistry, and biology

- In clinical data analysis testing, accuracy jumped from 47% to 67%, with fewer of the statistical-noise misclassifications that plagued earlier models

- Can identify internal contradictions in complex problems and present multiple valid interpretations

Important caveat: When external tools (search + code execution) are enabled, Claude Opus 4.6 regains the advantage in complex agentic tasks.

2. Long-Context Window — Up to 1 Million Tokens

- Supports a context window of up to 1,000,000 tokens

- Maintains stable performance (84.9% information extraction accuracy) within 128,000 tokens

- Performance degrades toward the full 1M-token range, but the window is still far larger than the context limits of Claude 3.5 (200K) and GPT-4 (128K)

- Eliminates the need to split large documents into chunks or repeatedly re-prompt for context

- Suitable for full codebase analysis, complete book ingestion, multi-contract legal comparison, and long-session conversations without “memory loss”
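
The two thresholds above (a 128K stable zone and a 1M ceiling) suggest a simple pre-flight check before sending a large document. The sketch below is illustrative only: the four-characters-per-token ratio is a rough assumption for English text, not the model's tokenizer, and the function names are hypothetical.

```python
# Thresholds taken from the figures quoted in this article.
STABLE_ZONE_TOKENS = 128_000     # range reported as stable
MAX_CONTEXT_TOKENS = 1_000_000   # advertised ceiling

# Assumption: roughly 4 characters per token for English prose.
# A real tokenizer will give different counts.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Return a rough token estimate using ceiling division."""
    return -(-len(text) // CHARS_PER_TOKEN)


def classify_context(token_count: int) -> str:
    """Label a prompt size as 'stable', 'degraded', or 'too_large'."""
    if token_count <= STABLE_ZONE_TOKENS:
        return "stable"
    if token_count <= MAX_CONTEXT_TOKENS:
        return "degraded"
    return "too_large"
```

A caller might log a warning for `"degraded"` prompts and fall back to chunking only for `"too_large"` ones.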

3. Native Multimodal Architecture

Unlike models where multimodal capability was added post-hoc, Gemini 3.1 Pro is built from the ground up to process all modalities in a unified architecture:

- Natively processes text, image, video, and audio — no tool-chaining required

- File upload limit increased from 20MB to 100MB

- New YouTube URL support — analyze video content directly without downloading or compressing files

- Can process a 30-minute product demo video, generate a structured transcript, extract key timestamps, and produce implementation-ready UI code — all in a single conversation

- Generates vector-quality SVG animations from design inputs, infinitely scalable with minimal file size

4. Code Generation — Competition-Grade Algorithm Design

- Terminal-Bench 2.0 score: 68.5%, significantly ahead of GPT-5.2's 54.0%

- Algorithm design performance comparable to GPT-5.3-Codex in competitive programming scenarios

- Consistent 2–3 second response times for coding tasks

- Capable of optimizing fine-tuning scripts — demonstrated reduction of runtime from 300 seconds to 47 seconds

- Generates complete unit tests alongside code

- Limitation: Lags behind GPT-5.3-Codex and Claude Opus 4.6 on large-scale software engineering tasks such as full codebase refactoring and complex bug remediation

5. Output Quality and Pricing — More for the Same Cost

- Maximum output tokens increased from 8,000 to 65,000 — eliminates truncation in long documents and multi-file code generation

- New three-tier reasoning mode (Low / Medium / High):

  - Low: Speed-optimized for simple queries and conversational tasks

  - Medium: Balanced performance for most general use cases

  - High: Depth-optimized for complex reasoning and professional-grade analysis

- Pricing remains unchanged from the previous generation:

  - Input (≤200K tokens): $2.00 per million tokens

  - Output (≤200K tokens): $12.00 per million tokens

  - Input/Output (>200K tokens): Double the above rates
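
To make the pricing concrete, here is a small cost estimator built from the rates listed above. It is a sketch under one stated assumption: that the 200K threshold is applied to the input (prompt) size and that crossing it doubles both the input and output rates. The function name is hypothetical.

```python
INPUT_RATE_USD_PER_M = 2.00     # per million input tokens, from the list above
OUTPUT_RATE_USD_PER_M = 12.00   # per million output tokens, from the list above
LONG_PROMPT_THRESHOLD = 200_000


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one request in US dollars."""
    # Assumption: the tier is decided by prompt size, and both rates double.
    multiplier = 2 if input_tokens > LONG_PROMPT_THRESHOLD else 1
    input_cost = input_tokens / 1_000_000 * INPUT_RATE_USD_PER_M * multiplier
    output_cost = output_tokens / 1_000_000 * OUTPUT_RATE_USD_PER_M * multiplier
    return round(input_cost + output_cost, 6)
```

Under these assumptions, a 100,000-token prompt with a 10,000-token reply costs about $0.32, while a 300,000-token prompt with the same reply costs about $1.44.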

Benchmark Comparison: Gemini 3.1 Pro vs. GPT-5.2 vs. Claude Opus 4.6

| Benchmark | Gemini 3.1 Pro | Claude Opus 4.6 | GPT-5.2 |
|---|---|---|---|
| ARC-AGI-2 (abstract reasoning) | 77.1% | 68.8% | Not disclosed |
| Humanity's Last Exam (no tools) | 44.4% | 40.0% | 34.5% |
| Terminal-Bench 2.0 (coding) | 68.5% | Not disclosed | 54.0% |
| Clinical data accuracy | 67% | n/a | n/a |
| Context window | 1M tokens | 200K tokens | 128K tokens |
| Max output tokens | 65K | 32K | 16K |
| Agentic tasks (with tools) | Second | First | Third |
| Large-scale software engineering | Third | First | Second |
| Native multimodal architecture | Yes | No | No |
| Pricing (input, ≤200K) | $2.00/M | $15.00/M | $10.00/M |
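
For readers who want to work with these numbers, the sketch below encodes the three percentage benchmarks from the comparison and computes Gemini 3.1 Pro's lead over the best rival that has a disclosed score. The data structure and function name are illustrative. Undisclosed scores are stored as `None` and excluded, so a lead is only ever measured against published figures.

```python
from typing import Optional

# Scores in percent, copied from the comparison; None means "not disclosed".
SCORES: dict[str, dict[str, Optional[float]]] = {
    "ARC-AGI-2": {
        "Gemini 3.1 Pro": 77.1, "Claude Opus 4.6": 68.8, "GPT-5.2": None,
    },
    "Humanity's Last Exam (no tools)": {
        "Gemini 3.1 Pro": 44.4, "Claude Opus 4.6": 40.0, "GPT-5.2": 34.5,
    },
    "Terminal-Bench 2.0": {
        "Gemini 3.1 Pro": 68.5, "Claude Opus 4.6": None, "GPT-5.2": 54.0,
    },
}


def lead_over_best_rival(benchmark: str,
                         model: str = "Gemini 3.1 Pro") -> Optional[float]:
    """Return the lead in percentage points over the best disclosed rival."""
    row = SCORES[benchmark]
    rivals = [s for name, s in row.items() if name != model and s is not None]
    own = row[model]
    if own is None or not rivals:
        return None
    return round(own - max(rivals), 1)
```

On this data the leads work out to 8.3 points on ARC-AGI-2, 4.4 on Humanity's Last Exam, and 14.5 on Terminal-Bench 2.0, the last measured against GPT-5.2 only.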

Where Gemini 3.1 Pro Excels — and Where It Falls Short

Strengths: Where It Leads the Field

- Pure reasoning tasks — abstract logic, interdisciplinary academic analysis, research-grade problem solving

- Multimodal workflows — video summarization, design-to-code conversion, audio transcription, native SVG generation

- Long-document processing — up to 128K tokens with stable accuracy; 1M token ceiling for maximum coverage

- Competitive algorithm design — significantly ahead of GPT-5.2 on terminal coding benchmarks

Weaknesses: Where to Choose Alternatives

- Tool-augmented agentic workflows — Claude Opus 4.6 outperforms when search and code execution tools are active

- Large-scale software engineering — GPT-5.3-Codex and Claude Opus 4.6 perform better on SWE-Bench Pro and complex codebase refactoring

- Real-time knowledge — knowledge cutoff is January 2025; requires RAG integration or search tools for events after that date

- Cybersecurity-sensitive deployments — has reached a security “alert threshold” in this domain; enterprise deployments in critical infrastructure require additional filtering layers

Who Should Use Gemini 3.1 Pro? Four User Groups That Benefit Most

1. Researchers and Professional Specialists (Healthcare, Law, Science)

The model's upgraded reasoning accuracy directly reduces manual verification workloads. Clinical staff can analyze complex patient data with higher precision, legal professionals can cross-reference multi-document contracts in a single session, and academic researchers can work through multi-step derivations across disciplines. The jump from 47% to 67% accuracy in clinical data analysis alone represents a meaningful productivity gain in high-stakes professional environments.

2. Software Developers and Engineering Teams

The three-tier reasoning mode lets developers tune the trade-off between response speed and analytical depth on a per-task basis. Strong algorithm design capability, fast response times, and automatic unit test generation make it well-suited for algorithmic problem-solving and API development. Teams working on large-scale refactoring or complex multi-agent pipelines may still prefer Claude Opus 4.6 for those specific tasks.
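
One way to apply per-task tuning is a small lookup that maps a task category to a reasoning tier and defaults to Medium. This is a sketch, not part of any SDK: the task category names are hypothetical, and the lowercase tier strings are an assumption about how an API might spell them.

```python
from enum import Enum


class ReasoningTier(str, Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Hypothetical task categories, chosen to mirror the guidance in this article.
TIER_BY_TASK = {
    "chat": ReasoningTier.LOW,
    "simple_query": ReasoningTier.LOW,
    "research": ReasoningTier.HIGH,
    "complex_reasoning": ReasoningTier.HIGH,
}


def pick_tier(task_type: str) -> ReasoningTier:
    """Return the tier for a task type, falling back to MEDIUM."""
    return TIER_BY_TASK.get(task_type, ReasoningTier.MEDIUM)
```

Keeping the mapping in one table makes it easy to adjust as a team learns which tasks actually need the High tier.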

3. Small and Mid-Sized Businesses and Startups

The unchanged pricing structure means organizations can access frontier AI capability without absorbing new cost. The model handles intelligent customer support, batch document processing, and multimodal marketing asset creation — use cases that previously required enterprise-tier budgets. The 65,000-token output limit eliminates the need to chain multiple API calls for longer deliverables.

4. Content Creators and Designers

Native multimodal support allows creatives to complete entire production workflows — drafting, image analysis, video summarization, and SVG animation generation — within a single model interface. Long-context handling enables fast processing of extensive research materials, interview transcripts, and multi-chapter source documents without losing coherence across the session.

How to Get Started with Gemini 3.1 Pro: 6 Access Methods

Option 1: Gemini App or Web Interface (No Setup Required)

Download the Gemini app or visit the Gemini web platform. Sign in with a Google account to access basic features free of charge. Supports text input plus image, video, and audio uploads. Best for general queries, content creation, and casual experimentation — no technical knowledge required.

Option 2: Google AI Studio — Developer API Access

- Visit Google AI Studio

- Sign in with your Google account

- Generate an API key from the dashboard

- Call the Gemini 3.1 Pro endpoint with your preferred language SDK

- New accounts include a free usage tier; billing begins after quota is exceeded

Supports multimodal inputs, batch requests, and all three reasoning modes via API parameters.
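
As a rough illustration of the last step, the sketch below builds (but does not send) a REST request using only the Python standard library. Several details are assumptions to verify against the official API reference: the model ID `gemini-3.1-pro-preview`, the `thinkingConfig.thinkingLevel` field used to select the reasoning mode, and the `GEMINI_API_KEY` environment variable name.

```python
import json
import os
import urllib.request

API_BASE = "https://generativelanguage.googleapis.com/v1beta"
MODEL_ID = "gemini-3.1-pro-preview"  # assumption: check the published model list


def build_request(prompt: str, api_key: str,
                  thinking_level: str = "medium") -> urllib.request.Request:
    """Build a generateContent POST request without sending it."""
    body = {
        "contents": [{"parts": [{"text": prompt}]}],
        # Assumption: the reasoning mode is selected through this field.
        "generationConfig": {"thinkingConfig": {"thinkingLevel": thinking_level}},
    }
    return urllib.request.Request(
        url=f"{API_BASE}/models/{MODEL_ID}:generateContent",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-goog-api-key": api_key},
        method="POST",
    )


def send(request: urllib.request.Request) -> dict:
    """Send the request and return the decoded JSON response."""
    with urllib.request.urlopen(request) as response:
        return json.load(response)


def api_key_from_env() -> str:
    """Read the key from the environment (variable name is an assumption)."""
    return os.environ.get("GEMINI_API_KEY", "")
```

Typical use would be `send(build_request("Summarize this file", api_key_from_env(), "low"))`. Reading the key from the environment keeps it out of source control.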

Option 3: Google Cloud Vertex AI — Enterprise Deployment

For organizations requiring enterprise SLAs, dedicated compute, compliance controls, and integration with existing cloud infrastructure. Access through Google Cloud Console under the Vertex AI product. Supports custom fine-tuning, private data handling, and high-volume production workloads.

Option 4: Gemini CLI and Google Antigravity — Advanced Developer Workflows

Gemini CLI enables local terminal-based interactions with the model for scripting and automation. Google Antigravity is Google's agent development platform, suitable for building multi-step autonomous workflows. The model is also available in Android Studio for mobile development work.

Option 5: NotebookLM — Academic and Research Users

Google's NotebookLM product surfaces Gemini 3.1 Pro capabilities in a research-oriented interface optimized for document analysis, source synthesis, and long-form academic work. Pro and Ultra subscription tiers unlock higher usage limits.

Option 6: Third-Party API-Compatible Platforms

For developers in regions with restricted Google API access, several third-party platforms offer Gemini 3.1 Pro access through OpenAI-compatible API formats, enabling integration without significant code changes.
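
"OpenAI-compatible" generally means the platform accepts the chat completions request shape: a `model` name plus a list of `messages`, sent with a bearer token. The sketch below builds such a request with the standard library. The base URL and model name are placeholders, since each platform publishes its own.

```python
import json
import urllib.request

BASE_URL = "https://api.example.invalid/v1"  # placeholder: use your provider's URL
MODEL_NAME = "gemini-3.1-pro"                # placeholder: provider-specific name


def build_chat_request(user_message: str, api_key: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completions request without sending it."""
    body = {
        "model": MODEL_NAME,
        "messages": [{"role": "user", "content": user_message}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )
```

Because only the base URL and model name differ, existing code written against this request shape usually needs just those two values changed.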

Note: Gemini 3.1 Pro is currently in preview release. Google is actively collecting feedback on complex agentic workflows before the stable production release. For mission-critical deployments, test thoroughly in a staging environment first.

Gemini 3.1 Pro and the Competitive AI Landscape

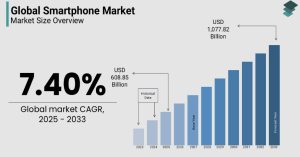

The release of Gemini 3.1 Pro signals a meaningful structural shift in how top-tier AI models compete. Until now, the frontier model market was largely segmented by specialty: GPT series models led on coding, Claude series on agentic capability, and Gemini on multimodal processing.

Gemini 3.1 Pro's simultaneous improvement across all four dimensions — reasoning, context, multimodal, and code — combined with unchanged pricing, applies direct competitive pressure across the entire landscape. Industry analysts expect this release to accelerate upgrade cycles at both OpenAI and Anthropic.

The broader beneficiary, however, is the developer and enterprise ecosystem: when frontier capability becomes more accessible without cost increases, the barrier to building AI-powered products drops — and the pace of real-world AI adoption accelerates.

Frequently Asked Questions

Q: What is Gemini 3.1 Pro's most important benchmark improvement? A: The most significant jump is on ARC-AGI-2, the abstract reasoning benchmark, where Gemini 3.1 Pro scores 77.1% compared to 31.1% in the previous generation. That is a more than twofold improvement, and it also surpasses Claude Opus 4.6 (68.8%), the only competitor with a disclosed score on this benchmark.

Q: Is Gemini 3.1 Pro more expensive than the previous version? A: No. Pricing is identical to Gemini 3 Pro: $2.00 per million input tokens and $12.00 per million output tokens for requests of up to 200,000 tokens. Above 200,000 tokens, both rates double. Developers and enterprises receive substantially upgraded capability at no additional cost.

Q: How does Gemini 3.1 Pro compare to Claude Opus 4.6? A: Gemini 3.1 Pro outperforms Claude Opus 4.6 on abstract reasoning (77.1% vs. 68.8%) and graduate-level academic tasks (44.4% vs. 40.0%). On terminal coding it scores 68.5%, but no Claude Opus 4.6 score has been disclosed, so a direct comparison is not possible. Claude Opus 4.6 retains an advantage in tool-augmented agentic tasks and large-scale software engineering workflows.

Q: What is the three-tier reasoning mode and how should I use it? A: The Low/Medium/High reasoning mode lets you control the trade-off between speed and analytical depth. Use Low for simple conversational queries, High for complex professional reasoning and research tasks, and Medium for the majority of everyday workflows. This replaces the need to prompt-engineer for depth on a per-request basis.

Q: Does Gemini 3.1 Pro truly support 1 million tokens? A: Yes, the ceiling is 1 million tokens, but performance is most reliable within 128,000 tokens (84.9% information extraction accuracy). Accuracy decreases beyond that range. For most practical use cases, such as long documents, large codebases, and extended research sessions, the 128K stable zone is already on par with the full context windows listed for competing models (128K to 200K), and the 1M ceiling extends well beyond them.

Q: What is Gemini 3.1 Pro's knowledge cutoff date? A: January 2025. The model does not have awareness of events after that date. For real-time or current-events use cases, pair it with search tool integration or a RAG (Retrieval-Augmented Generation) architecture to supplement its static training knowledge.
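
To show what the RAG pairing looks like in its simplest form, the sketch below retrieves the snippets that share the most words with the question and places them ahead of the question in the prompt. It is a toy: real systems typically use embedding search rather than word overlap, and all function names here are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str], top_k: int = 2) -> list[str]:
    """Return up to top_k documents ranked by word overlap with the question."""
    question_words = tokenize(question)
    scored = [(len(question_words & tokenize(doc)), index, doc)
              for index, doc in enumerate(documents)]
    # Highest overlap first; ties keep the original document order.
    ranked = sorted(scored, key=lambda item: (-item[0], item[1]))
    return [doc for score, _, doc in ranked[:top_k] if score > 0]


def build_prompt(question: str, documents: list[str], top_k: int = 2) -> str:
    """Prepend retrieved context to the question."""
    context = retrieve(question, documents, top_k)
    context_block = ("\n".join(f"- {c}" for c in context)
                     or "- (no relevant context found)")
    return ("Use only the context below to answer.\n\n"
            f"Context:\n{context_block}\n\nQuestion: {question}")
```

The resulting string is sent as the prompt, so the model answers from the supplied snippets rather than from its static training knowledge.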

Q: Is Gemini 3.1 Pro safe for enterprise use? A: It is suitable for most enterprise applications, but Google has flagged that the model has reached a security “alert threshold” in the cybersecurity domain specifically. Organizations deploying it in security-sensitive or critical infrastructure contexts should implement additional safety filtering layers before production rollout.

Q: When will the stable (non-preview) version be released? A: Google has not announced a specific date. The current preview release is being used to gather feedback on complex agentic workflows. If your use case depends on advanced multi-step agent capabilities, wait for the stable release before deploying to production. Other workloads can proceed after thorough staging tests.